
Huawei Cloud announced a dramatic increase in its AI computing capacity at the China International Big Data Industry Expo in Guiyang. CEO Zhang Ping'an reported that the company’s total compute resources have grown 250% year-over-year and that Ascend AI Cloud customers have surged from 321 to 1,714. The expansion signals Huawei’s accelerated investment in AI infrastructure to meet rising demand for model training, large-scale inference, and enterprise AI applications.
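To put the reported growth figures on a common scale, the short sketch below converts them into percentage increases and multiples. The helper names are illustrative, and it assumes that "grown 250%" means an increase of 250% over the prior year (that is, 3.5 times the earlier capacity), which the announcement does not spell out.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, expressed in percent."""
    return (new - old) / old * 100


def growth_multiple(old: float, new: float) -> float:
    """How many times larger new is than old."""
    return new / old


def multiple_from_percent_increase(pct: float) -> float:
    """Convert a percentage increase into a multiple of the starting value.

    Assumption: the percentage is an increase on top of the baseline,
    so +250% corresponds to 3.5x, not 2.5x.
    """
    return 1 + pct / 100


# Customer counts quoted in the article.
customers_before, customers_after = 321, 1714

print(f"Customer increase: {percent_increase(customers_before, customers_after):.0f}%")
print(f"Customer multiple: {growth_multiple(customers_before, customers_after):.2f}x")
print(f"Compute multiple:  {multiple_from_percent_increase(250):.1f}x")
```

Under that reading, the customer base grew faster than compute capacity: roughly a 434% increase in customers against a 250% increase in compute.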

Network Architecture and Supernodes

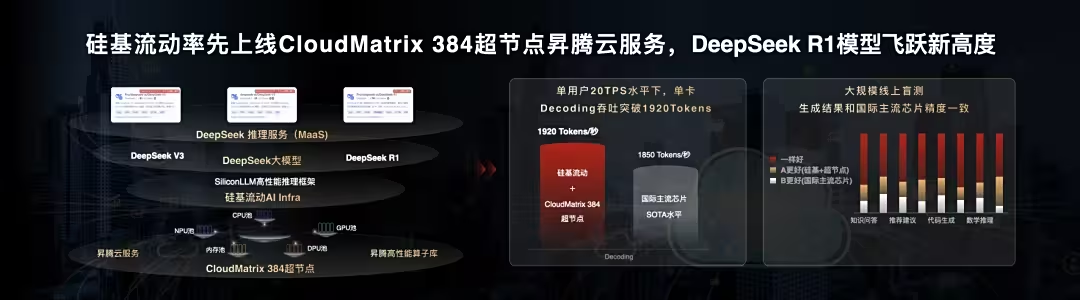

CloudMatrix 384 and a nationwide cluster

Huawei is rolling out a so-called "black soil of computing power" across four hubs: Gui'an, Ulanqab, Horqin, and Wuhu. The Gui'an site hosts the CloudMatrix 384 supernode, which integrates 384 Ascend NPUs with 192 Kunpeng CPUs. Huawei claims that a single CloudMatrix 384 can deliver roughly 300 PFlops of peak performance and train more than 1,300 large-scale models simultaneously. With 432 supernodes online, the network forms an approximately 160,000-card AI cluster that, according to the company, has been operating stably for over two years.
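The cluster size follows from the per-supernode figures, and a quick calculation makes the arithmetic explicit. The sketch below is a back-of-the-envelope check, not a benchmark: it assumes every one of the 432 supernodes matches the CloudMatrix 384 configuration and that peak performance adds up linearly, neither of which the announcement confirms. The function and constant names are illustrative.

```python
# Figures quoted in the article (Huawei's own claims, not independently verified).
NPUS_PER_SUPERNODE = 384
CPUS_PER_SUPERNODE = 192
PEAK_PFLOPS_PER_SUPERNODE = 300
SUPERNODES_ONLINE = 432


def cluster_totals(supernodes: int = SUPERNODES_ONLINE) -> dict:
    """Scale the per-supernode figures linearly to the whole cluster.

    Assumption: all supernodes share the CloudMatrix 384 configuration
    and peak throughput sums without interconnect losses.
    """
    return {
        "npus": supernodes * NPUS_PER_SUPERNODE,
        "cpus": supernodes * CPUS_PER_SUPERNODE,
        "peak_pflops": supernodes * PEAK_PFLOPS_PER_SUPERNODE,
    }


totals = cluster_totals()

# Implied peak per NPU, converting PFlops to TFlops (1 PFlops = 1000 TFlops).
per_npu_tflops = PEAK_PFLOPS_PER_SUPERNODE * 1000 / NPUS_PER_SUPERNODE

print(f"NPUs in cluster:    {totals['npus']:,}")
print(f"CPUs in cluster:    {totals['cpus']:,}")
print(f"Aggregate peak:     {totals['peak_pflops']:,} PFlops")
print(f"Implied peak/NPU:   {per_npu_tflops:.2f} TFlops")
```

The NPU total comes to 165,888, which is consistent with the "approximately 160,000-card" description.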

Product Features

Huawei’s platform blends high-density Ascend NPUs, Kunpeng CPUs, optical networking, and optimized power management to boost space and energy efficiency. Key features include:

- High-throughput NPU acceleration for training and inference

- Scalable supernodes designed for parallel model training

- Integrated networking and optical links for low-latency communication

- Energy and rack-level power optimization to reduce TCO

Comparisons and Market Position

While global hyperscalers such as AWS, Microsoft Azure, and Google Cloud dominate many international markets, Huawei Cloud is narrowing the gap in AI infrastructure domestically and targeting select international customers. The launch of the Wuhu supernode in April emphasizes support for global workloads and for China’s East-West Computing initiative (commonly rendered as "East Data, West Computing"), which redistributes computing tasks across regions to optimize latency and cost.

Advantages and Use Cases

Huawei highlights financial services as an early adopter: Gui'an and Ulanqab hubs reportedly support more than 1,000 daily AI-driven financial applications. Other use cases include large-scale model training, generative AI workloads, recommendation systems, and cross-regional enterprise deployments.

Market Relevance and Strategic Impact

This expansion reinforces Huawei’s bid to be a major AI infrastructure provider. By combining hardware, networking, optical communication, and power management, Huawei aims to deliver competitive performance-per-watt and meet enterprise demands for higher throughput and lower latency.

Conclusion

Huawei Cloud’s 250% compute growth, large-scale Ascend-powered cluster, and focus on efficiency position it as a rising alternative for AI infrastructure, especially within China and for customers seeking regionally optimized compute under the East-West Computing initiative.

Source: gizmochina